Client overview

Our client is developing a computer vision solution designed to recognize and classify drill bit markers from visual data. These markers are critical for identifying drill bit types, specifications, and usage categories in construction environments.

The model requires precise visual inputs to differentiate drill bit markers under various lighting conditions, angles, and levels of wear. Because drill bits have detailed edges and irregular shapes, simple bounding boxes were not sufficient. The project required 2D polygon annotation to accurately trace the contour of each drill bit marker.

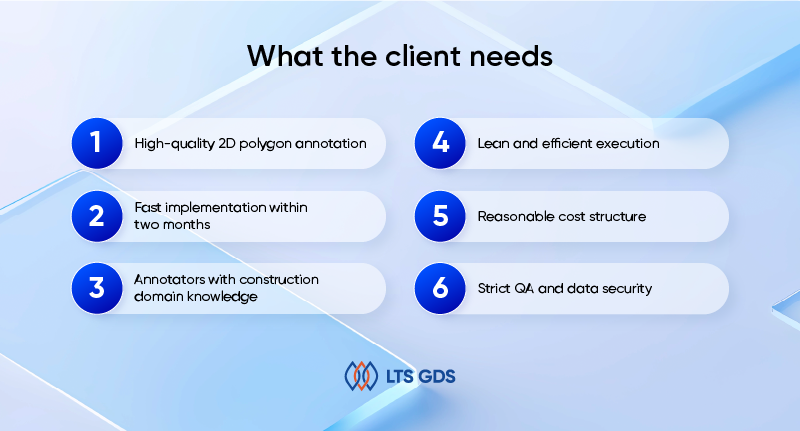

What the client needed

The requirements were straightforward and practical.

High-quality 2D polygon annotation

Each drill bit marker had to be outlined accurately using polygon annotation. Clean edges and tight boundaries were necessary to ensure strong model training performance.

Fast implementation within two months

The dataset had to be completed quickly. The timeline was fixed and non-negotiable.

Annotators with construction domain knowledge

Because drill bits vary by structure, material, and marker type, the team needed a basic understanding of construction tools to avoid confusing similar shapes.

Lean and efficient execution

The client preferred a streamlined process without unnecessary layers or delays.

Reasonable cost structure

The solution needed to balance speed, quality, and budget.

Strict QA and data security

All data had to remain secure within the client’s annotation environment, with a structured QA workflow to control errors.

How we did it

The project covered 10,000 images and approximately 25,000 drill bit marker objects. Given the short timeline of two months, we focused on preparation, specialization, and structured quality control from day one.

1. Requirement alignment

Before production started, we conducted a detailed alignment session with the client. We clarified:

– Exact polygon boundary requirements

– Whether to include shadows or reflections

– How to handle partially visible drill bits

– Rules for overlapping tools

– Minimum pixel threshold for small markers

Because only one main class, drill bit, needed annotation, the focus was on boundary precision rather than class complexity. We documented all instructions and converted them into internal guidelines used in annotator training sessions.
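To illustrate how a rule such as the minimum pixel threshold can be written down unambiguously, here is a minimal sketch that computes polygon area with the shoelace formula and compares it against a cutoff. The function names and the value of `MIN_AREA_PX` are hypothetical; the actual threshold agreed with the client is not stated in this case study, and the check assumes simple (non-self-intersecting) polygons in pixel coordinates.

```python
def polygon_area(points):
    """Area in square pixels of a simple polygon given as [(x, y), ...] (shoelace formula)."""
    n = len(points)
    twice_area = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the polygon
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


# Hypothetical cutoff for illustration only; not the client's real value.
MIN_AREA_PX = 100.0


def is_large_enough(points, min_area=MIN_AREA_PX):
    """True if the polygon has at least 3 vertices and meets the area cutoff."""
    return len(points) >= 3 and polygon_area(points) >= min_area
```

A check like this could run at submission time so that markers below the agreed size are either skipped or flagged consistently, instead of being left to each annotator's judgment.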

2. Selecting annotators with construction domain expertise

The client required team members who understood construction tools. This was important because:

– Drill bits can appear similar but differ slightly in structure

– Some markers may be worn or partially hidden

– Industrial images often contain visual noise

We selected 15 annotators for the project, prioritizing those with prior experience in construction-related or industrial datasets.

3. Lean production workflow

Because the timeline was limited to two months, the workflow needed to be efficient. We structured the process as follows:

– Image batches were distributed daily.

– Annotators completed 2D polygon outlining for each drill bit marker.

– Self-check was mandatory before submission.

– QA review followed immediately after each batch.

We avoided unnecessary approval layers. Communication was kept direct and fast through KakaoTalk, which allowed real-time clarification when edge cases appeared.

4. Precision-focused 2D polygon execution

Polygon annotation requires careful control of edge placement. Annotators were instructed to:

– Follow the exact contour of the drill bit marker

– Avoid overly simplified shapes

– Avoid excessive polygon points that create noise

– Maintain consistent vertex density across similar objects

If ambiguity appeared, the case was flagged immediately. The QA lead provided clarification to prevent repeated mistakes.
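The vertex density guideline above can be made measurable. The sketch below is one possible way to do it, not a description of the tooling used on the project: it defines density as vertices per 100 pixels of perimeter and flags polygons outside a range. The `low` and `high` bounds are hypothetical placeholders that would need to be tuned on reviewed samples.

```python
import math


def perimeter(points):
    """Perimeter in pixels of a closed polygon given as [(x, y), ...]."""
    n = len(points)
    return sum(math.dist(points[i], points[(i + 1) % n]) for i in range(n))


def vertex_density(points):
    """Number of vertices per 100 px of perimeter."""
    p = perimeter(points)
    return 100.0 * len(points) / p if p > 0 else float("inf")


def flag_density(points, low=2.0, high=20.0):
    """Classify a polygon as 'too_sparse', 'too_dense', or 'ok'.

    low/high are illustrative bounds, not values from the project.
    """
    d = vertex_density(points)
    if d < low:
        return "too_sparse"  # likely an oversimplified outline
    if d > high:
        return "too_dense"   # likely noisy, excessive points
    return "ok"
```

Comparing density across similar objects, rather than against a fixed bound alone, is closer to the stated goal of consistency, so the same metric could also be grouped per annotator or per batch.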

5. Multi-layer quality assurance

To maintain consistent results across 10,000 images, we applied a structured QA model.

Layer 1 (self-review): Annotators checked their own polygon shapes and class assignment before submission.

Layer 2 (cross-check): Another annotator reviewed a sample of completed images to detect missed objects or inaccurate boundaries.

Layer 3 (QA lead review): The QA lead conducted vertical checks across batches to identify systematic issues.

Layer 4 (final validation): Before delivery, final sampling was performed to ensure compliance with the client’s quality expectations.

Whenever recurring issues were identified, we conducted short retraining sessions. This prevented small problems from scaling across the dataset.
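The sampling steps in the cross-check and final validation layers can be sketched as follows. This is an illustration under stated assumptions: the sampling fraction, the function names, and the definition of error rate as rejected objects divided by reviewed objects are all hypothetical, since the case study does not specify them.

```python
import random


def draw_review_sample(image_ids, fraction, seed=None):
    """Pick a random subset of images for review.

    fraction is the share of the batch to review (an assumed parameter);
    at least one image is always selected.
    """
    ids = list(image_ids)
    k = max(1, round(len(ids) * fraction))
    rng = random.Random(seed)  # seeded so a sample can be reproduced for audits
    return rng.sample(ids, k)


def error_rate(reviewed_objects, rejected_objects):
    """Share of reviewed objects that failed review (assumed definition)."""
    if reviewed_objects <= 0:
        raise ValueError("reviewed_objects must be positive")
    return rejected_objects / reviewed_objects
```

For example, `draw_review_sample(batch_ids, 0.1, seed=7)` would select 10% of a batch, and tracking `error_rate` per batch gives an early signal for when a short retraining session is needed.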

6. Security and reporting

All annotation work was performed directly within the client’s tool. No data was downloaded, copied, or transferred outside the secured environment. Access was limited to authorized team members only, ensuring full confidentiality throughout the project.

To keep the project on track, we provided regular progress reports covering production volume, quality metrics, and any issues that required clarification. This allowed the client to monitor performance closely and make adjustments when needed.

At the end of the project, we delivered a final summary report outlining total output, accuracy rate, challenges encountered, and improvement points. This helped both teams review performance and identify areas for optimization in future collaboration.

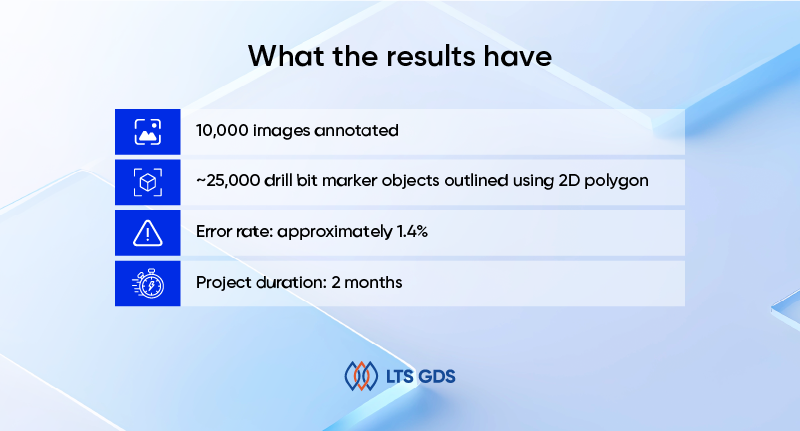

What the results show

Within the two-month timeline, we delivered:

– 10,000 images annotated

– ~25,000 drill bit marker objects outlined using 2D polygon annotation

– Error rate: approximately 1.4%

– Project duration: 2 months

Despite the tight timeline, quality remained stable across the full dataset.
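For a sense of scale, the headline figures can be combined with simple arithmetic. The sketch below uses only numbers stated in this case study (10,000 images, roughly 25,000 objects, 15 annotators, an error rate of about 1.4%). It assumes an even split of images across annotators and that the error rate is measured per object; neither is confirmed by the figures above.

```python
IMAGES = 10_000
OBJECTS = 25_000      # approximate, as reported
ANNOTATORS = 15
ERROR_RATE = 0.014    # approximate, as reported

# Average number of drill bit marker objects per image.
objects_per_image = OBJECTS / IMAGES

# Average images per annotator, assuming an even split (an assumption).
images_per_annotator = IMAGES / ANNOTATORS

# Rough count of objects with errors, assuming the rate is per object (an assumption).
objects_with_errors = round(OBJECTS * ERROR_RATE)

print(f"{objects_per_image:.1f} objects per image")
print(f"about {images_per_annotator:.0f} images per annotator")
print(f"about {objects_with_errors} objects with errors")
```

Under those assumptions, this works out to 2.5 objects per image, about 667 images per annotator over the two months, and about 350 objects with errors.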