Client overview

Our client is developing a computer vision system that requires precise identification of multiple object types within structured images. The system depends on accurate annotation to detect and distinguish different components placed inside defined areas.

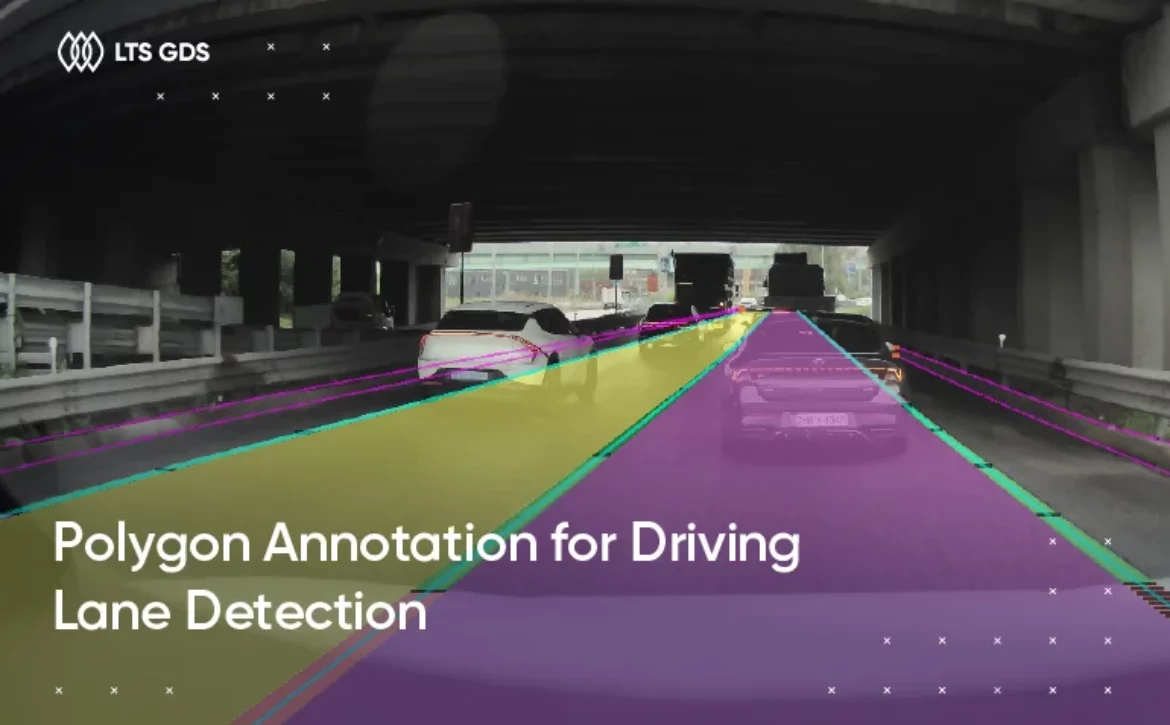

Unlike general object detection tasks, this project required detailed labeling of components that appear within a boxed region. The model needed to understand not just the presence of items, but also their exact shape and boundaries. For that reason, 2D segmentation was selected instead of simple bounding-box annotation.

What the client needs

The client’s requirements were clear from the beginning.

High-quality 2D segmentation

Annotators needed to identify and precisely segment multiple fashion-related components inside a defined box area. Clean masks were required to support accurate model training.

Multiple component classes

There were nine component classes in total, including top, under, one-piece, shoes, bag, hat, and eyewear. Each component had to be segmented accurately, even when overlapping or partially occluded.

Lean and efficient deployment

The client wanted a streamlined setup. The project had to start quickly and run smoothly without unnecessary overhead.

Strict QA and data security

All annotation work had to follow a clear quality control framework and remain within the client’s secured environment.

How we did it

The project ran over five months, covering 6,000 images and approximately 50,000 objects. From the beginning, we focused on building a stable workflow that could maintain consistent quality over time.

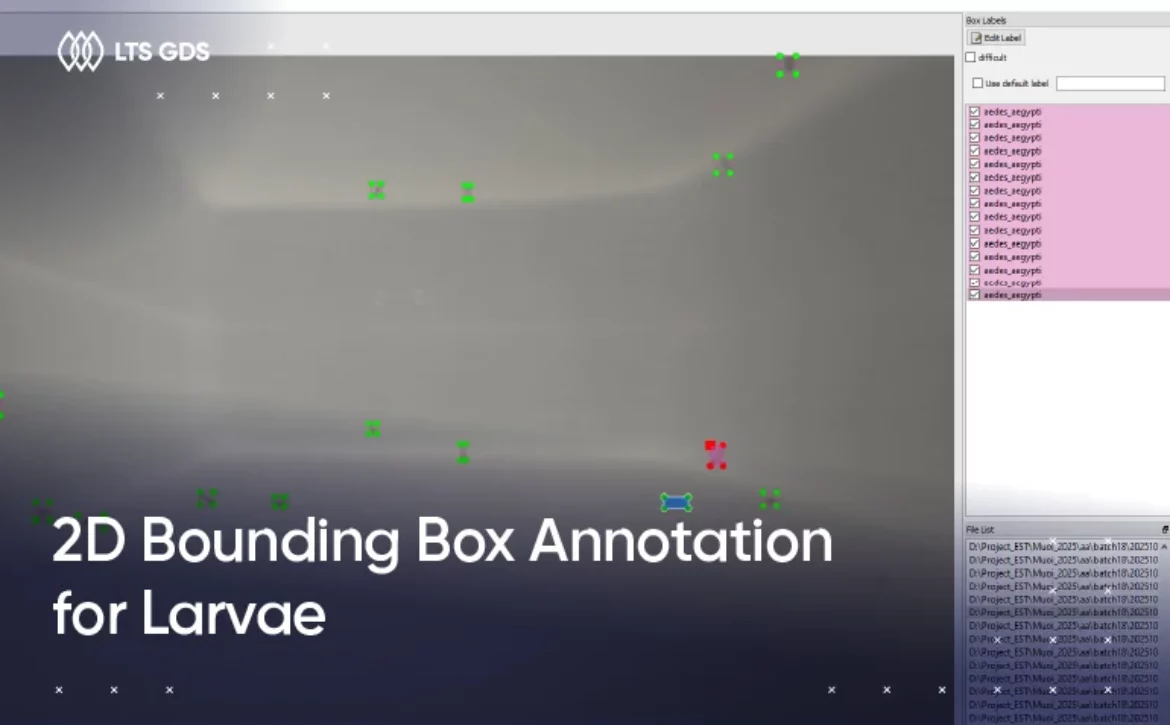

1. Defining segmentation rules for component boundaries

Before launching production, we aligned with the client on detailed segmentation rules. We clarified:

– Exact boundary expectations for each component

– How to handle overlaps between items (for example, bag straps crossing clothing)

– Treatment of partially visible items

– Whether small accessories attached to items should be included or excluded

Since all components were located within a boxed region, we confirmed that annotators should segment only items fully or partially inside that area.
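
The box rule above can be expressed as a simple check. The following is a minimal sketch, assuming each mask is a row-major grid of 0/1 values and the box is given as (left, top, right, bottom) pixel coordinates with exclusive right and bottom edges. The function names and data layout are illustrative assumptions, not the project's actual tooling.

```python
def pixels_inside_box(mask, box):
    """Count foreground pixels of `mask` that fall inside `box`.

    mask: list of rows, each a list of 0/1 values (hypothetical layout).
    box:  (left, top, right, bottom), with right and bottom exclusive.
    """
    left, top, right, bottom = box
    count = 0
    for y, row in enumerate(mask):
        if not (top <= y < bottom):
            continue  # row lies entirely outside the box
        for x, value in enumerate(row):
            if value and left <= x < right:
                count += 1
    return count


def should_annotate(mask, box):
    """An object qualifies if at least one of its pixels lies inside the box."""
    return pixels_inside_box(mask, box) > 0
```

Under this reading, an item that is only partially inside the box still qualifies, which matches the "fully or partially inside" rule.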

2. Building a lean segmentation team

We assigned a team of 10 annotators with prior experience in pixel-level annotation tasks. Because the client required lean and efficient execution, we avoided overcomplicating the structure. The team included:

– Annotators responsible for segmentation

– A QA lead overseeing quality checks

– A project coordinator managing communication and reporting

We conducted short calibration tasks before full production began. This helped us verify:

– Mask accuracy and edge handling

– Correct class selection

– Consistency across different annotators

Only after alignment was confirmed did we move to full-scale annotation.
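
One common way to quantify consistency across annotators during calibration is intersection over union (IoU) between two annotators' masks for the same object. The source does not state which metric was used, so the sketch below is an assumption for illustration, with masks represented as same-sized grids of 0/1 values.

```python
def mask_iou(mask_a, mask_b):
    """Intersection over union of two same-sized 0/1 masks."""
    intersection = 0
    union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            if a and b:
                intersection += 1
            if a or b:
                union += 1
    # Two empty masks are treated as perfect agreement by convention here.
    return 1.0 if union == 0 else intersection / union
```

A value near 1.0 means two annotators drew nearly identical masks. Lower values point to boundary or class-rule disagreements worth discussing before production.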

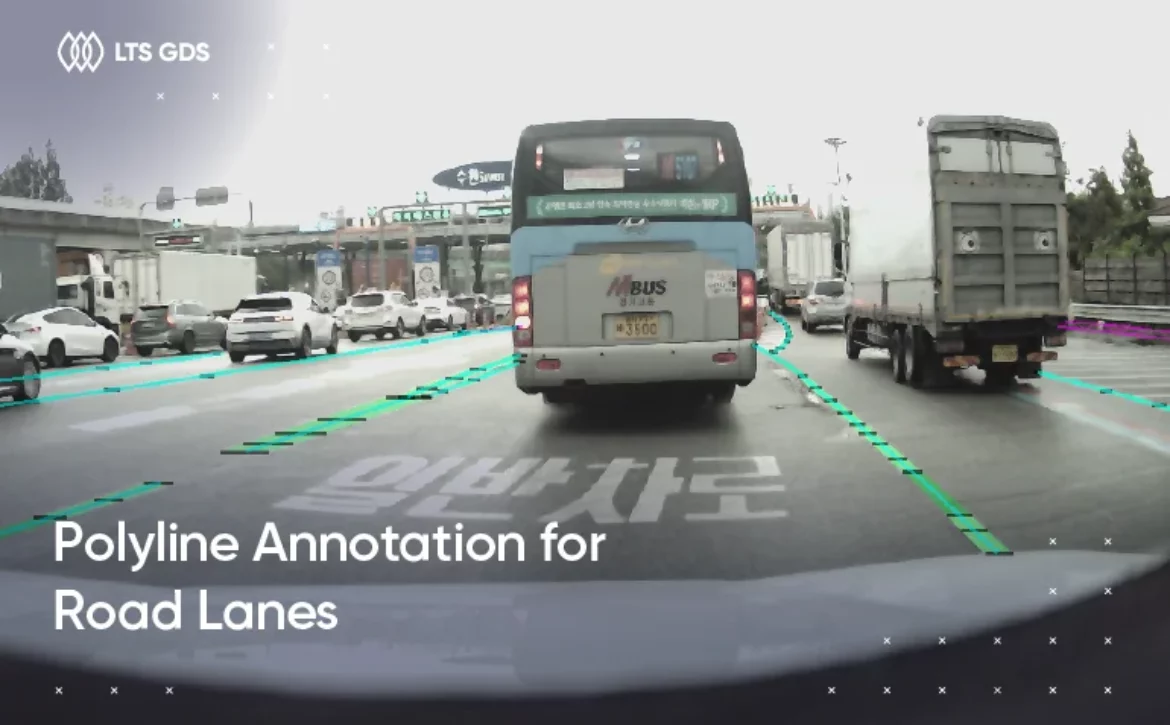

3. Structured 2D segmentation execution

Our process followed a controlled workflow. Annotators first segmented each component at the pixel level and reviewed their own work before submission. The labeled data then went through QA before delivery to the client. Two points received particular attention:

– Keeping mask edges clean, without gaps or rough shapes

– Avoiding over-segmentation, so that each mask captured only the relevant pixels
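
Checks like these can be partly automated. As a minimal sketch, assuming a rectangular mask stored as a grid of 0/1 values, the code below measures each 4-connected foreground region and flags masks that contain small isolated fragments next to the main region. The function names and the `min_pixels` threshold are hypothetical, chosen only for illustration.

```python
from collections import deque


def component_sizes(mask):
    """Return the pixel count of each 4-connected foreground region."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    sizes = []
    for y in range(height):
        for x in range(width):
            if not mask[y][x] or seen[y][x]:
                continue
            # Breadth-first flood fill from this unvisited foreground pixel.
            queue = deque([(y, x)])
            seen[y][x] = True
            size = 0
            while queue:
                cy, cx = queue.popleft()
                size += 1
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            sizes.append(size)
    return sizes


def has_stray_fragments(mask, min_pixels=3):
    """Flag masks with small isolated regions besides the largest one."""
    sizes = sorted(component_sizes(mask), reverse=True)
    return any(size < min_pixels for size in sizes[1:])
```

A flag here is a prompt for human review rather than an automatic rejection, since a partially occluded item can legitimately appear as more than one region.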

Progress tracking was managed through Google Sheets, where we monitored image counts, object counts, and quality metrics.

4. Multi-layer quality control throughout the project

Maintaining consistency over five months required a structured QA approach. We implemented a four-step QA framework:

– Self-check: Annotators reviewed their own segmentation masks before submission.

– Cross-review: Another team member checked for missing objects or incorrect masks.

– Vertical review: QA leads reviewed samples across batches to detect recurring issues.

– Final inspection: A final validation pass ensured deliverables met the client’s acceptance criteria.
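
The sampling behind a vertical review can be sketched as follows. This is an illustrative assumption, not the project's actual procedure: the batch layout, the 10% default rate, and the function name are all hypothetical. A fixed seed keeps the selection reproducible, so the same sample can be re-inspected later.

```python
import random


def sample_for_review(batches, rate=0.1, seed=0):
    """Pick a reproducible random sample of image IDs from every batch.

    batches: dict mapping batch name -> list of image IDs (hypothetical).
    rate:    fraction of each batch to review; at least one image is
             selected from every non-empty batch.
    """
    rng = random.Random(seed)
    selected = {}
    for name, image_ids in batches.items():
        if not image_ids:
            selected[name] = []
            continue
        # Clamp the sample size to the range [1, batch size].
        k = min(len(image_ids), max(1, round(len(image_ids) * rate)))
        selected[name] = sorted(rng.sample(image_ids, k))
    return selected
```

Sampling from every batch, rather than from the pooled dataset, makes it more likely that an issue confined to one delivery period is noticed.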

5. Consistent delivery

The project spanned five months, so steady performance was essential. We delivered annotated batches regularly rather than waiting until the end of the timeline, which let the client validate outputs early and adjust requirements as needed. We also shared regular updates on image volume, object counts, and QA error trends, which helped improve overall output quality.
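
A QA error trend of this kind can be derived from per-batch review counts. The sketch below assumes the error rate is measured per reviewed object, which the source does not specify, and uses a hypothetical tuple layout for the batch statistics.

```python
def error_rate_trend(batch_stats):
    """Compute per-batch and running error rates.

    batch_stats: list of (batch_name, objects_reviewed, objects_with_errors)
                 tuples in delivery order (hypothetical layout).
    Returns a list of (batch_name, batch_rate, cumulative_rate) tuples.
    """
    trend = []
    total_reviewed = 0
    total_errors = 0
    for name, reviewed, errors in batch_stats:
        total_reviewed += reviewed
        total_errors += errors
        # Guard against empty batches to avoid division by zero.
        batch_rate = errors / reviewed if reviewed else 0.0
        cumulative = total_errors / total_reviewed if total_reviewed else 0.0
        trend.append((name, batch_rate, cumulative))
    return trend
```

Reporting both figures side by side separates a one-off bad batch from a drift in overall quality.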

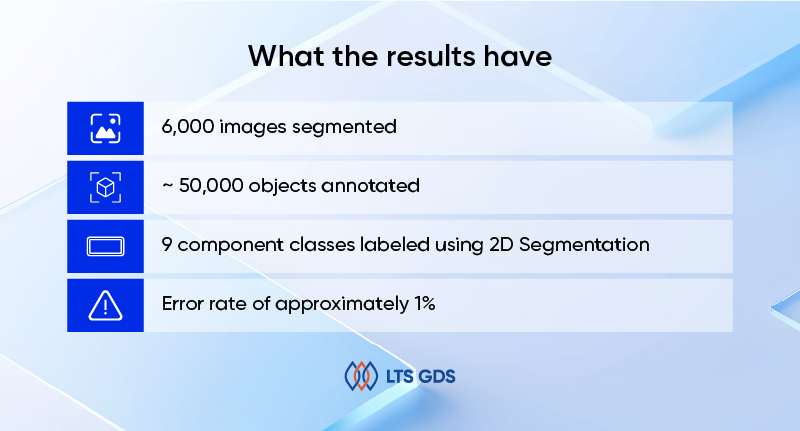

What the results show

At the end of the project, we achieved:

– 6,000 images segmented

– ~50,000 objects annotated

– 9 component classes labeled using 2D segmentation

– Error rate of approximately 1%

– Total project duration: 5 months

The client received a clean, consistent dataset ready for computer vision model training.