Client overview

Our client is developing a computer vision system designed to monitor operational environments such as warehouses and manufacturing facilities. Their system focuses on detecting forklifts during active operations, particularly when forklifts are lifting pallets.

This use case is critical in industrial environments. Forklift–pallet interactions must be accurately interpreted to support safety monitoring, workflow optimization, and automated reporting. The AI model must understand not only object presence but also object posture, alignment, and lifting position.

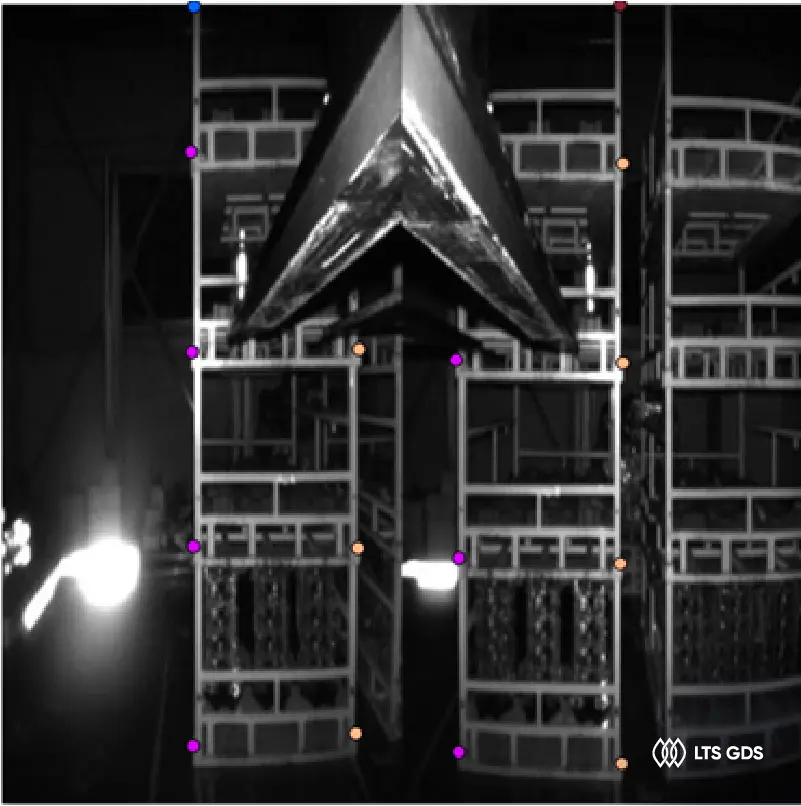

To achieve this, the client required high-precision 2D key point annotation that marks structural reference points on forklifts while they are actively lifting pallets.

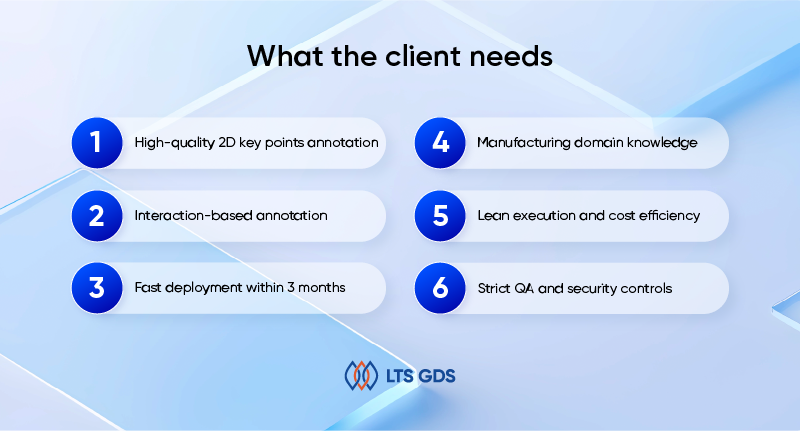

What the client needs

High-quality 2D key point annotation

The project required precise placement of key points on forklifts while they were lifting pallets. This covered structural reference points such as the forks, mast, wheels, and cabin area, as well as pallet contact points.

Interaction-based annotation

The annotation target was not standalone forklifts or pallets. Instead, the focus was on forklifts actively lifting or carrying pallets. The relationship between the forks and pallet had to be clearly represented.

Fast deployment within 3 months

The dataset was large (40,000 images), and the three-month timeline was fixed.

Manufacturing domain knowledge

Annotators needed to understand how forklifts operate in real environments. Misinterpreting lifting positions or pallet alignment could reduce model accuracy.

Lean execution and cost efficiency

The team structure needed to be efficient without unnecessary overhead.

Strict QA and security controls

Data confidentiality and accuracy tracking were mandatory.

How we did it

1. Selecting annotators with manufacturing domain understanding

We started by building a team of 15 annotators with prior experience in industrial or manufacturing-related annotation projects. Forklift operation follows predictable mechanics, so annotators had to understand how forks align with pallets, how lifting angles change under load, and how pallets are positioned in real warehouse settings. Before production began, we aligned with the client on:

– Definition of forklift–pallet interaction states

– Key point positions required on forklifts

– Key structural markers (fork tips, fork base, mast top, wheel centers, pallet edges)

– Handling partial visibility

– Rules for occlusion

– Image rejection criteria

We conducted sample annotation rounds and refined guidelines until both sides agreed on placement consistency.
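To illustrate what an agreed key point definition might look like in practice, here is a minimal sketch. The key point names, the COCO-style visibility flag, and the `validate_annotation` helper are all assumptions made for illustration; the actual schema agreed with the client is not described in this case study.

```python
from dataclasses import dataclass
from enum import IntEnum


class Visibility(IntEnum):
    """COCO-style visibility flag (assumed convention, not the project's confirmed one)."""
    NOT_LABELED = 0  # point cannot be annotated, e.g. outside the frame
    OCCLUDED = 1     # position labeled, but hidden behind another object
    VISIBLE = 2      # position labeled and clearly visible


# Hypothetical key point names, loosely based on the structural markers listed above.
FORKLIFT_KEYPOINTS = (
    "fork_tip_left", "fork_tip_right",
    "fork_base_left", "fork_base_right",
    "mast_top",
    "wheel_center_front", "wheel_center_rear",
)


@dataclass(frozen=True)
class KeyPoint:
    name: str
    x: float
    y: float
    visibility: Visibility


def validate_annotation(points, width, height):
    """Return a list of problems found in one forklift annotation."""
    problems = []
    seen = {p.name for p in points}
    # Every expected key point must be present, even if flagged NOT_LABELED.
    for name in FORKLIFT_KEYPOINTS:
        if name not in seen:
            problems.append(f"missing key point: {name}")
    for p in points:
        if p.name not in FORKLIFT_KEYPOINTS:
            problems.append(f"unknown key point: {p.name}")
        elif p.visibility != Visibility.NOT_LABELED and not (
            0 <= p.x < width and 0 <= p.y < height
        ):
            # Labeled points must fall inside the image bounds.
            problems.append(f"{p.name} lies outside the image")
    return problems
```

A fixed name list plus an explicit visibility flag is one way to encode the partial-visibility and occlusion rules, so that a hidden fork tip is recorded as occluded rather than silently skipped.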

2. Focused training on 2D key point placement

2D key points require more precision than bounding boxes. Even small deviations can affect model performance. Training sessions covered:

– Structural anatomy of forklifts

– Fork alignment during lifting

– Correct key point placement on moving components

– Identifying load-bearing positions

– Handling perspective distortion

– Managing partially visible pallets

We used reference diagrams and real production images to ensure clarity. Each annotator completed pilot batches. QA leads reviewed these carefully and provided direct feedback. Only annotators meeting accuracy standards moved to full production.
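One way a pilot batch could be scored against reference annotations is sketched below. This is an illustration under stated assumptions, not the scoring method used on the project: the normalization by bounding-box diagonal, the `tolerance` value of 0.02, and the function names are all hypothetical.

```python
import math


def normalized_deviation(annotated, reference, bbox_width, bbox_height):
    """Distance between two (x, y) points, divided by the object's bounding-box diagonal."""
    dx = annotated[0] - reference[0]
    dy = annotated[1] - reference[1]
    diagonal = math.hypot(bbox_width, bbox_height)
    return math.hypot(dx, dy) / diagonal


def pilot_accuracy(annotated_points, reference_points, bbox_size, tolerance=0.02):
    """Share of reference key points that the annotator placed within `tolerance`.

    Both point arguments are dicts mapping a key point name to an (x, y) tuple.
    A key point missing from `annotated_points` counts as a miss.
    """
    width, height = bbox_size
    within = sum(
        1
        for name, ref in reference_points.items()
        if name in annotated_points
        and normalized_deviation(annotated_points[name], ref, width, height) <= tolerance
    )
    return within / len(reference_points)
```

Normalizing by object size means a 5-pixel offset counts for more on a small, distant forklift than on one that fills the frame, which matches the point that small deviations matter.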

3. Structured annotation execution

Once production began, we implemented a lean workflow to maintain speed without losing quality. Production process:

– Images were assigned in controlled batches

– Annotators marked required key points on forklifts lifting pallets

– Each image was self-reviewed before submission

– Batches were forwarded for QA review

Because forklifts often operate in cluttered environments, annotators needed to carefully distinguish between pallets being lifted and pallets stacked nearby. When uncertain cases appeared, annotators flagged them immediately for clarification rather than labeling based on assumptions.

4. Multi-layer quality assurance

Quality control followed a structured multi-layer approach:

– Self-check: Annotators reviewed their own images before submission.

– Cross-review: Selected samples were reviewed by peers to detect inconsistencies.

– Vertical review by QA leads: QA leads examined batch-level patterns to identify systematic errors.

– Final validation before delivery: We randomly reviewed 30% of the labeled dataset before delivery.
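The final random check can be pictured with a short sketch. It assumes the sample is drawn uniformly at the image level; the function name, the `seed` parameter, and the use of image IDs are illustrative choices, not details taken from the project.

```python
import random


def select_validation_sample(image_ids, fraction=0.30, seed=None):
    """Draw a uniform random sample of image IDs for final review.

    `fraction` is the share of the dataset to review (30% by default).
    Passing a `seed` makes the selection reproducible for audit purposes.
    """
    rng = random.Random(seed)
    ids = list(image_ids)
    sample_size = round(len(ids) * fraction)
    # random.Random.sample draws without replacement, so no image is picked twice.
    return rng.sample(ids, sample_size)
```

Sampling without replacement and recording the seed lets a reviewer reproduce exactly which images were checked before delivery.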

5. Secure workflow and transparent reporting

All annotation work was completed directly inside the client’s CVAT platform. No image data was downloaded or stored externally. Access rights were strictly managed. We provided:

– Weekly progress updates

– Object counts per batch

– QA accuracy reports

– Escalation notes when needed

WhatsApp was used for quick clarifications, especially for edge cases involving unusual forklift angles or pallet placements.

Google Sheets was used to track volume and quality metrics, keeping progress visible to both teams.

What the results show

Within the three-month timeline, we delivered:

– 40,000 images annotated

– ~80,000 forklift and pallet objects

– Two classes: forklifts and pallets

– Annotation type: 2D key points

– Error rate: approximately 1.1%

– Project duration: 3 months

Despite the large scale, quality remained stable from the first month to final delivery.
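For a sense of scale, the delivery figures can be put through some quick arithmetic. The sketch below assumes the error rate is measured per annotated object, which the figures above do not state, and both the object count and the error rate are approximate, so the result is only a rough estimate.

```python
images = 40_000
objects = 80_000     # reported as approximately 80,000
error_rate = 0.011   # reported as approximately 1.1%

# Average number of annotated objects per image.
objects_per_image = objects / images

# Assumption: the error rate is counted per object, not per image or per key point.
estimated_objects_with_errors = round(objects * error_rate)
```

Under that assumption, the dataset averages about two annotated objects per image, and roughly 880 of the 80,000 objects would contain an error.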