Client overview

Our client is a South Korea–based AI company providing intelligent solutions across multiple industries. For this project, they were building a computer vision system focused on construction site safety monitoring. The system uses AI-powered cameras to detect workers, machinery, and safety equipment in real time. The goal is to identify potential hazards early and reduce accidents on active construction sites.

What the client needed

High-quality 2D bounding box annotation

All relevant objects on construction sites had to be labeled precisely. Bounding boxes needed to be tight, consistent, and aligned with the model’s training standards.
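One common way to put a number on "tight" is intersection over union (IoU) between an annotator's box and a reviewer's reference box. The case study does not say which metric was used, so the sketch below is only an illustration; the `Box` type and the function names are hypothetical.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned box in pixel coordinates (hypothetical helper type)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def area(box: Box) -> float:
    """Area of a box; degenerate or inverted boxes count as zero."""
    return max(0.0, box.x_max - box.x_min) * max(0.0, box.y_max - box.y_min)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes, in the range [0, 1]."""
    overlap = Box(
        max(a.x_min, b.x_min),
        max(a.y_min, b.y_min),
        min(a.x_max, b.x_max),
        min(a.y_max, b.y_max),
    )
    intersection = area(overlap)
    union = area(a) + area(b) - intersection
    return intersection / union if union > 0 else 0.0
```

A loose box drawn around a small object lowers the IoU against the reference sharply, which makes this kind of check sensitive to the errors that matter most for wires and carabiners.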

Clear identification of safety-related elements

– The dataset had to cover both general objects and safety indicators, so that the model could recognize safety conditions as well as machinery and workers. The ten classes were “person”, “driver”, “forklift”, “heavy equipment”, “mobile crane”, “load”, “carabiner attached”, “carabiner detach”, “safe band”, and “wire”.

– Some classes required careful judgment, such as distinguishing a worker from a driver inside machinery, or deciding whether a carabiner was attached or detached.
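The class list above can be written down as a small label schema. This is a minimal sketch: the class names are taken from the text, while `validate_label`, `needs_second_look`, and `CONFUSABLE_PAIRS` are hypothetical names introduced here for illustration, not part of the project's tooling.

```python
# Class names as listed in the case study.
CLASSES = (
    "person",
    "driver",
    "forklift",
    "heavy equipment",
    "mobile crane",
    "load",
    "carabiner attached",
    "carabiner detach",
    "safe band",
    "wire",
)

# Pairs the text singles out as requiring careful judgment.
CONFUSABLE_PAIRS = (
    ("person", "driver"),
    ("carabiner attached", "carabiner detach"),
)


def validate_label(label: str) -> str:
    """Return the normalized label, or raise if it is not in the schema."""
    normalized = label.strip().lower()
    if normalized not in CLASSES:
        raise ValueError(f"unknown class: {label!r}")
    return normalized


def needs_second_look(label: str) -> bool:
    """True if the label belongs to a pair that is easy to confuse."""
    normalized = validate_label(label)
    return any(normalized in pair for pair in CONFUSABLE_PAIRS)
```

Flagging the confusable pairs explicitly is one way to route those labels to an extra review pass.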

Construction domain knowledge

Understanding how equipment operates and how safety gear is used was critical. Annotators needed to recognize unsafe configurations, not just object shapes.

Fast deployment within one month

The timeline was tight. The client needed rapid dataset preparation to continue model development.

How we did it

1. Clarifying safety logic and class boundaries

Before production started, we worked closely with the client to define clear rules for each class. Key discussions included:

– How to distinguish “person” from “driver”

– When to label heavy equipment versus forklift

– Rules for identifying mobile cranes in partial views

– How to determine “carabiner attached” vs. “carabiner detach”

– Minimum visibility threshold for labeling wires and safety bands

Additionally, we ran a pilot batch and reviewed the results with the client. This helped clarify gray areas, especially around safety equipment and attachment states.

2. Building a construction-aware annotation team

We assigned 20 annotators, selecting members with prior experience in construction or industrial datasets. Training sessions focused on:

– Common construction machinery types

– Safety harness systems and fall protection equipment

– Visual differences between attached and detached safety components

– Overlapping objects in crowded work zones

Annotators reviewed real construction scenarios to understand context. For example:

– A driver inside a forklift cabin

– Workers near suspended loads

– Safety wires partially hidden behind structures

Each annotator completed a qualification batch. Only those meeting internal quality thresholds moved to production.

3. Controlled production at scale

Given the one-month deadline, we divided the dataset into weekly batches. Each batch followed a clear process:

– Image assignment through the CVAT platform

– 2D bounding box annotation

– Self-check before submission

– QA review

– Feedback and correction if required
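Dividing a dataset into weekly batches can be sketched as below. The function name and the choice of four batches in the usage note are assumptions for illustration; the case study states only that batches were weekly and the deadline was one month.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def split_into_batches(items: Sequence[T], n_batches: int) -> List[List[T]]:
    """Split items into n_batches contiguous chunks whose sizes differ by at most one."""
    if n_batches <= 0:
        raise ValueError("n_batches must be positive")
    base, extra = divmod(len(items), n_batches)
    batches: List[List[T]] = []
    start = 0
    for index in range(n_batches):
        # The first `extra` batches absorb the remainder, one item each.
        size = base + (1 if index < extra else 0)
        batches.append(list(items[start:start + size]))
        start += size
    return batches
```

For example, `split_into_batches(image_ids, 4)` would give one batch per week of a four-week schedule, with each batch then passing through the steps listed above.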

4. Multi-layer quality assurance

Quality control was embedded from the start through a structured, multi-step review process.

– Annotators completed self-checks before submission. Selected batches were then cross-reviewed by peers to detect inconsistencies. QA leads conducted deeper reviews to identify recurring issues such as misclassification (person vs. driver), incorrect safety labels, loose bounding boxes, or missed small objects like wires.

– Before delivery, we performed random sampling to confirm compliance with project standards. When repeated errors appeared, we paused related tasks briefly to clarify guidelines and prevent the issue from spreading.
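A pre-delivery spot check of this kind can be sketched as a reproducible random sample. The sampling fraction and seed are assumptions; the case study does not state how large the samples were.

```python
import random
from typing import Iterable, List


def sample_for_review(image_ids: Iterable[str], fraction: float, seed: int = 0) -> List[str]:
    """Pick a reproducible random subset of images for a final QA check."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    population = list(image_ids)
    if not population:
        return []
    # Always review at least one image, even for very small batches.
    sample_size = max(1, round(len(population) * fraction))
    rng = random.Random(seed)  # fixed seed so the same sample can be re-drawn
    return sorted(rng.sample(population, sample_size))
```

Fixing the seed means a reviewer and a QA lead can draw the same sample independently and compare findings.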

5. Monitoring progress and maintaining transparency

We used Google Sheets to track:

– Daily image counts

– Object counts

– QA pass rates

– Error types

– Correction turnaround time
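Several of the metrics above can be derived from per-image review records. The sketch below assumes a hypothetical `ReviewRecord` structure; the actual spreadsheet layout is not described in the case study.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ReviewRecord:
    """One reviewed image (hypothetical record layout)."""
    image_id: str
    object_count: int
    passed_qa: bool
    error_type: Optional[str] = None  # e.g. "loose box"; None when QA passed


def summarize(records: List[ReviewRecord]) -> Dict[str, object]:
    """Aggregate image count, object count, QA pass rate, and error types."""
    total = len(records)
    passed = sum(1 for record in records if record.passed_qa)
    return {
        "images": total,
        "objects": sum(record.object_count for record in records),
        "qa_pass_rate": passed / total if total else 0.0,
        "error_types": Counter(
            record.error_type for record in records if record.error_type
        ),
    }
```

Running `summarize` once per day over that day's records would yield the rows of a daily tracking sheet.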

The client received regular updates on progress and quality metrics. This transparency built trust and allowed the client to monitor development without micromanaging the process.

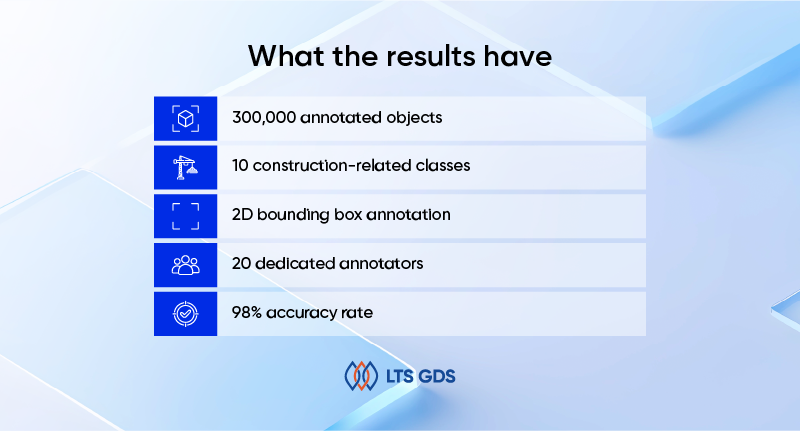

What we achieved

Within one month, we delivered:

– 300,000 annotated objects

– 10 construction-related classes

– 2D bounding box annotation

– 20 dedicated annotators

– 98% accuracy rate

Despite the high object volume and complexity of construction environments, quality remained stable throughout production.